Title: How to build a Low-Tech website: Software & Hardware
Date: 2018-9-08
Category: solar server
Tags: solar power, static sites, energy optimization, web design
Slug: low-tech-website-howto
Summary: How to build a low tech website by optimizing web design, server settings and hardware.
Author: Roel Roscam Abbing
Status: published

[TOC]

Earlier this year we were asked to help redesign the website of lowtechmagazine.com. The primary goal of the redesign was to radically reduce the energy use associated with accessing its web content. At the same time it is an attempt to find out what a low-tech website could be.

In general the idea behind lowtechmagazine.com is to understand technologies and techniques of the past and combine them with the knowledge of today. Not in order to be able to 'do more with the same', but rather 'to do the same with less'.

In this particular case it means that all the optimizations and increases in efficiency do not go towards making a website which is faster at delivering even more megabytes. Rather it is a website which uses all the advances in technological efficiency, combined with specific hardware and software choices, to radically and drastically cut resource usage. At the same time for us a sustainable web site means ensuring support for older hardware, slower networks and improving the portability and archivability of the blog's content.

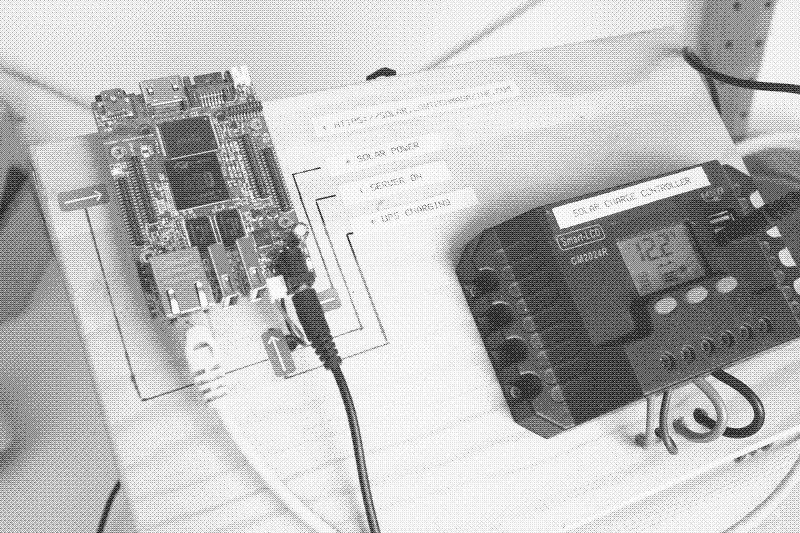

This meant making a website and server which could be hosted from the off-grid solar system used in the lowtechmagazine.com's home-office in Barcelona.

The article 'How To Build A Low-Tech Website?' gives more insight into the motivations for making a self-hosted solar-powered server, while this companion article gives in-depth technical information.

Both the articles and the redesign should be read as a proposition for how things could be done, but also as a question about what could be improved. So we really appreciate additional insights and feedback.

Software

Operating system

The webserver is running on Armbian Stretch, which is a Debian-based distribution built around the SUNXI kernel. This is a kernel for low-powered AllWinner-based single board computers. The Armbian User Guide provides good documentation on how to write an Armbian image to an SD card and boot the board for the first time.

Pelican Static Site & Design

The main change in the webdesign was to move from a dynamic website based on Typepad1 to a static site generated by Pelican. Static sites load faster and require less processing than dynamic websites. This is because the pages are pre-generated and read off the disk, rather than being generated on every visit.2

You can find the source code for 'solar', the Pelican theme we developed here

Image compression

One of the main challenges was to reduce the overall size of the website, and in particular to reduce the size of each page to less than 1MB. Since a large part of both the appeal and the weight of the magazine comes from the fact it is richly illustrated, this presented us with a particular challenge.

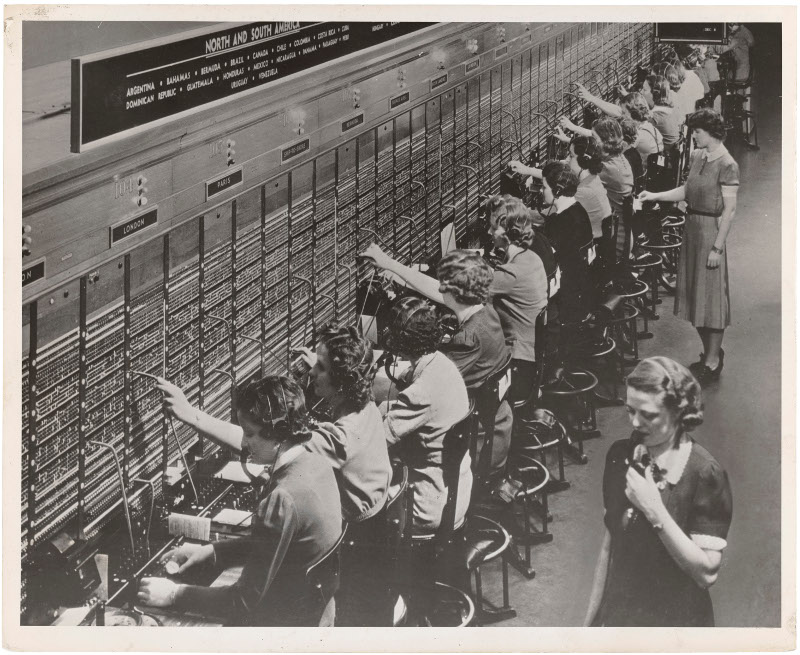

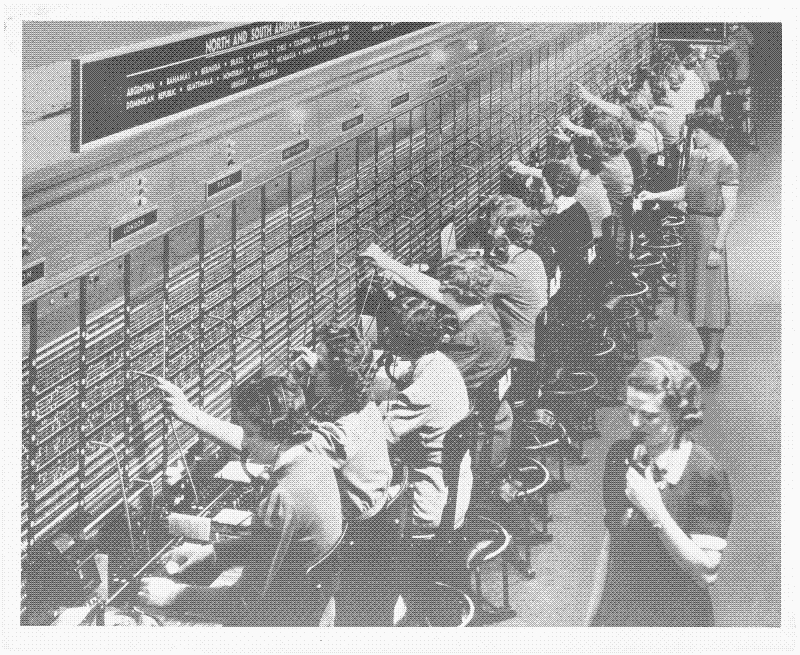

Image from the blog showing 20th century telephone switchboard operators (original source), 159.5KB

In order to reduce the size of the images, without diminishing their role in the design and the blog itself, we reverted to a technique called dithering:

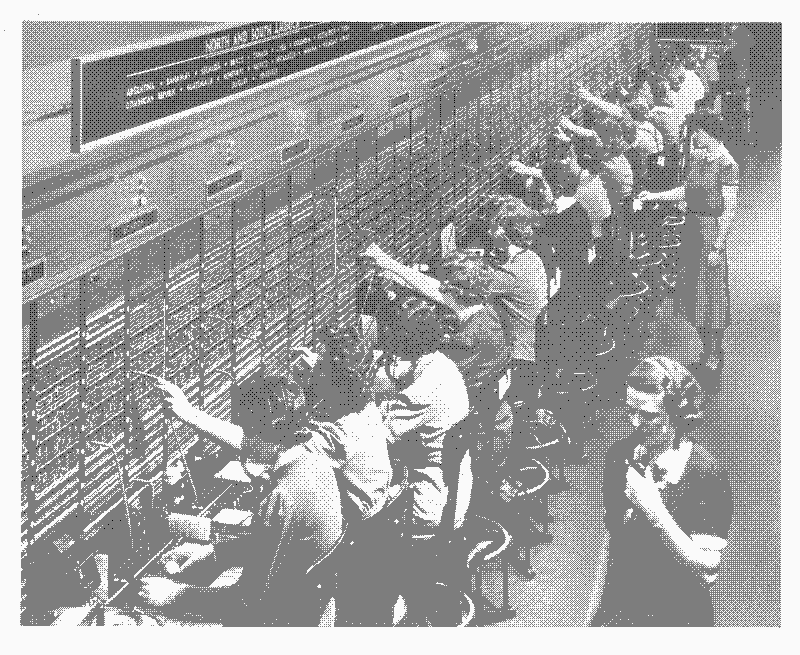

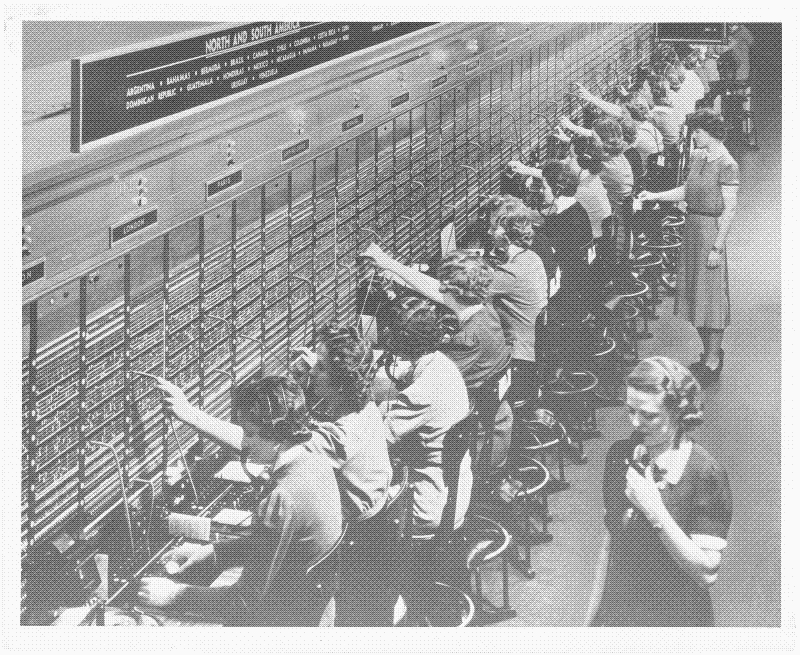

The same image but dithered with a 3 color palette, 36.5KB

This is a technique 'to create the illusion of "color depth" in images with a limited color palette'3. It is based on the print reproduction technique called halftoning. Dithering, or digital half-toning4, was widely used in video games and pixel art at a time when a limited amount of video memory constrained the available colors. In essence dithering relies on optical illusions to simulate more colors. These optical illusions are broken, however, by the distinct and visible patterns that the dithering algorithms generate.

Dithered with a six tone palette, 76KB

As a consequence most of the effort and literature on dithering is around limiting the 'banding' or visual artifacts by employing increasingly complex dithering algorithms5.

Our design instead celebrates the visible patterns introduced by the technique, to stress the fact that it is a 'different' website. Coincidentally, the 'Bayer Ordered Dithering' algorithm that we use not only introduces these distinct visible patterns, but is also quite a simple and fast algorithm.

Dithered with an eleven tone palette, 110KB

We chose dithering not only for the compression but also for the aesthetic and reading experience. Converting the images to grayscale and then dithering them allows us to scale them in a visually attractive way to 100% of the view port, despite their small sizes. This gives each article a visual consistency and provides the reader with pauses in the long articles.

To automatically dither the images on the blog we wrote a plugin for Pelican that converts all source images of the blog. The plugin is based on the Python Pillow imaging library and hitherdither, a dithering palette library by Henrik Blidh.
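To give a feel for why ordered dithering is simple and fast, here is a minimal pure-Python sketch of the idea on a grayscale image stored as rows of 0-255 values. It is not the code of our plugin and it does not use Pillow or hitherdither; the function name `bayer_dither`, the 4x4 threshold matrix and the evenly spaced gray palette are assumptions made for illustration.

```python
# Minimal sketch of ordered (Bayer) dithering on a grayscale image.
# Hypothetical helper for illustration, not the plugin's actual code.

# Standard 4x4 Bayer threshold matrix (values 0..15).
BAYER_4X4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]


def bayer_dither(pixels, levels=3):
    """Quantize rows of 0-255 gray values to `levels` evenly spaced tones."""
    if levels < 2:
        raise ValueError("need at least two tones")
    step = 255 / (levels - 1)
    out = []
    for y, row in enumerate(pixels):
        out_row = []
        for x, value in enumerate(row):
            # Position-dependent offset in (-0.5, 0.5), repeating every 4 pixels.
            threshold = (BAYER_4X4[y % 4][x % 4] + 0.5) / 16 - 0.5
            # Nudge the value by the offset, then snap to the nearest tone.
            index = round(value / step + threshold)
            index = max(0, min(levels - 1, index))
            out_row.append(round(index * step))
        out.append(out_row)
    return out


# A flat mid-gray patch turns into a regular pattern of the allowed tones.
patch = [[128] * 8 for _ in range(8)]
for row in bayer_dither(patch, levels=2):
    print("".join("#" if v else "." for v in row))
```

Each pixel only needs a table lookup and a rounding step, with no error carried over to neighbouring pixels, which is what makes the visible repeating pattern and the low processing cost go hand in hand.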

Using this custom plug-in we reduced the total weight of the 623 images that are on the blog so far by 89%, from 194.2MB to a mere 21.3MB.

Archiving and portability

Another reason to switch to a static site generator was to be able to ensure an off-line workflow, where the articles can be written and previewed locally in the browser. For this to happen the articles had to be converted to Markdown, a light weight markup language based on plain text files.

While this is quite a bit of work to do with an archive that spans 10 years of writing, it ensures the portability of the archive for future redesigns or other projects. It also makes it possible for us to archive and version the entire blog using the git versioning system.

Off-line archive

Because we designed the system to have an uptime of only 90%, it is expected to be off-line for roughly 36 days a year (10% of 365 days).

Increasing the uptime of the server to 99% on solar energy means increasing the website's ecological footprint by adding more and more tech in the form of extra solar panels, massively increased battery capacity or extra servers in different geographic locations.

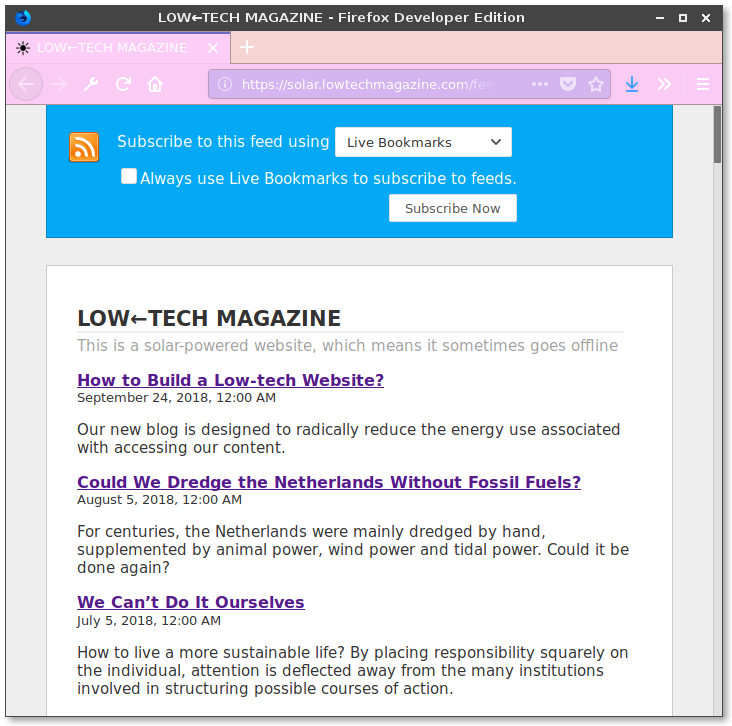

Rather than that we opted for a low-tech approach that accepts being off-line during longer stretches of cloudy weather. However, we wanted to provide the reader with off-line reading options. Our primary method of doing so currently is by providing an RSS feed containing all the articles and images on the site. In the future we will explore other means of providing off-line reading of the magazine.

Most browsers preview only the article titles and summaries of the RSS feed. In fact the feed contains the full articles. Once you add the feed to your favorite reader, it will download the full articles that you can read at any given time.

Material Server

"[...] the minimal file-based website is contrary to a cloud mentality, where the material circumstances of the hardware and hosting location are made irrelevant (for the cloud/vps customer) meaning that any 'service' can be 'deployed', 'scaled' 'migrated' etc. Our approach instead informs what can be hosted based on the material circumstances of the server."6

One of the page's fundamental design elements is to stress the materiality of the webserver. In web design there is a clear distinction between the 'front-end', the visual and content side of the website, and the 'back-end', the infrastructure it runs on. Outside of professional circles, the material conditions of the web or the internet's infrastructure are rarely discussed. This has become especially the case with cloud computing as the dominant paradigm, as resources are taken for granted or even completely virtualised.

A low-tech website means this distinction between front-end and back-end needs to disappear as choices on the front-end necessarily impact what happens on the back-end and vice-versa. Ignoring this connection usually leads to more energy usage.

An increase in traffic, for example, will have an impact on the amount of energy the server uses, just as a heavy or badly designed website will. Part of solar.lowtechmagazine.com's design aims to give the visitor an insight into the amount of power consumed and the potential (un)availability of the page in the coming days, based on current power usage and forecasts of the weather.

The power statistics can actually be queried from the server hardware. More on that below. To make the statistics available to the web site we wrote a bash script that exposes them as JSON in the webroot.

To activate this feature there is a cron entry that runs the script every minute and writes its output into the web directory:

:::console

*/1 * * * * /bin/bash /home/user/stats.sh > /var/www/html/api/stats.json
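Our own script is written in bash and is not reproduced here. As a rough sketch of the same idea in Python, the snippet below reads one number per file and writes the result as JSON. The sysfs paths in `EXAMPLE_SOURCES` are assumptions for illustration and differ between kernels and boards, and the function names are hypothetical.

```python
import json
from pathlib import Path

# Hypothetical sysfs locations; check what your own board and kernel expose.
EXAMPLE_SOURCES = {
    "battery_voltage": "/sys/class/power_supply/axp20x-battery/voltage_now",
    "battery_current": "/sys/class/power_supply/axp20x-battery/current_now",
}


def read_stats(sources):
    """Read one numeric value per file and return them as a dict."""
    stats = {}
    for name, path in sources.items():
        try:
            stats[name] = float(Path(path).read_text().strip())
        except (OSError, ValueError):
            stats[name] = None  # sensor missing or unreadable
    return stats


def write_stats(sources, target):
    """Write the statistics as JSON, replacing the old file in one step."""
    target = Path(target)
    tmp = target.with_name(target.name + ".tmp")
    tmp.write_text(json.dumps(read_stats(sources)))
    tmp.replace(target)  # readers never see a half-written file
```

Writing to a temporary file and renaming it avoids serving a partially written `stats.json` if a visitor requests it while the script is running.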

Configuring the webserver

As a webserver we use NGINX to serve our static files. However we made a few non-standard choices to further reduce the energy consumption and page loading times on (recurrent) visits.

To test some of the assumed optimizations we've done some measurements using a few different articles. We've used the following pages:

FP = Front page, 404.68KB, 9 images

WE = How To Run The Economy On The Weather, 1.31 MB, 21 images

HS = Heat Storage Hypocausts, 748.98KB, 11 images

FW = Fruit Walls: Urban Farming in the 1600s, 1.61MB, 19 images

CW = How To Downsize A Transport Network: Chinese Wheelbarrows, 996.8KB, 23 images

For this test the pages which are hosted in Barcelona have been loaded from a machine in the Netherlands. The indicated times are all the averages of 3 measurements.

Compression of transmitted data

We run gzip compression on all our text-based content. This lowers the size of transmitted information at the cost of a slight increase in required processing. By now this is common practice in most web servers but we enable it explicitly. Reducing the amount of data transferred will also reduce the total environmental footprint.

:::console

#Compression

gzip on;

gzip_disable "msie6";

gzip_vary on;

gzip_comp_level 6;

gzip_buffers 16 8k;

gzip_http_version 1.1;

gzip_types text/plain text/css application/json application/javascript text/xml application/xml application/xml+rss text/javascript;

A comparison of the amount of data sent with gzip compression enabled or disabled:

| GZIP | FP | WE | HS | FW | CW |
|----------|----------|----------|----------|----------|----------|
| disabled | 116.54KB | 146.08KB | 127.09KB | 125.36KB | 138.28KB |
| enabled | 39.6KB | 51.24KB | 45.24KB | 45.77KB | 50.04KB |
| savings | 66% | 65% | 64% | 63% | 64% |
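To get a feel for this trade-off on your own content, the following sketch compresses a piece of text with Python's standard `gzip` module at the same compression level (6) and reports the fraction of bytes saved. The helper name `gzip_savings` and the sample markup are made up for illustration; real pages will compress less well than this repetitive sample.

```python
import gzip


def gzip_savings(payload: bytes, level: int = 6) -> float:
    """Return the fraction of bytes saved by gzip at the given level."""
    if not payload:
        return 0.0
    compressed = gzip.compress(payload, compresslevel=level)
    return 1 - len(compressed) / len(payload)


# Made-up sample markup, only for illustration.
sample = b"<p>Low-tech solutions for a sustainable society.</p>\n" * 200
print(f"saved {gzip_savings(sample):.0%}")
```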

Caching of static resources

Caching is a technique in which some of the site's resources, such as style sheets and images, are provided with additional headers that tell the visitor's browser to save a local copy of those files. This ensures that the next time they visit the same page, the files are loaded from the local cache rather than being transmitted over the network again. This not only reduces the time to load the entire page, but also lowers resource usage both on the network and on our server.

The common practice is to cache everything except the HTML, so that when the user loads the web page again the HTML will notify the browser of all the changes. However, since lowtechmagazine.com publishes only 12 articles per year, we decided to also cache HTML. The cache is set for seven days, meaning it is only after a week that the user's browser will automatically check for new content. This behaviour is disabled only for the front and about pages.

:::console

map $sent_http_content_type $expires {

default off;

text/html 7d;

text/css max;

application/javascript max;

~image/ max;

}
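The map above can be read as a small lookup table from content type to cache lifetime. The sketch below mirrors that logic in Python, using the seven-day HTML lifetime from the full configuration. It is only an illustration of how the rules are matched, not something NGINX runs; the names are hypothetical and the ten-year value for `max` is our assumption about what NGINX sends as `max-age`.

```python
import re

SECONDS_PER_DAY = 24 * 60 * 60
# Assumption: "expires max" corresponds to a ten-year max-age.
MAX = 10 * 365 * SECONDS_PER_DAY

# Plain keys in the map are matched exactly.
EXACT = {
    "text/html": 7 * SECONDS_PER_DAY,
    "text/css": MAX,
    "application/javascript": MAX,
}
# Keys starting with "~" are regular expressions, e.g. "~image/".
PATTERNS = [(re.compile(r"image/"), MAX)]


def max_age_for(content_type):
    """Return the cache lifetime in seconds, or None for 'default off'."""
    if content_type in EXACT:
        return EXACT[content_type]
    for pattern, lifetime in PATTERNS:
        if pattern.search(content_type):
            return lifetime
    return None


for content_type in ("text/html", "image/png", "application/json"):
    print(content_type, max_age_for(content_type))
```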

Concretely this had the following effects:

The first time a page is loaded (FL) it takes around one second to fully load the page. The second time (SL), however, the files are loaded from the cache and the load time is reduced by 40% on average. Since load times are based on the time it takes to load resources over the network and the time it takes for the browser to render all the styling, caching can really decrease load times.

| Time(ms) | FP | WE | HS | FW | CW |
|----------|-------|--------|-------|--------|--------|
| FL | 995ms | 1058ms | 956ms | 1566ms | 1131ms |
| SL | 660ms | 628ms | 625ms | 788ms | 675ms |
| savings | 34% | 41% | 35% | 50% | 40% |

In terms of data transferred the change is even more radical, essentially meaning that no data is transferred the second time a page is visited.

| KBs | FP | WE | HS | FW | CW |
|----------|----------|-----------|----------|-----------|----------|
| FL | 455.86KB | 1240.00KB | 690.48KB | 1610.00KB | 996.21KB |
| SL | 0.38KB | 0.37KB | 0.37KB | 0.37KB | 0.38KB |
| savings | >99% | >99% | >99% | >99% | >99% |

In case you want to force the browser to load cached resources over the network again, do a 'hard refresh' by pressing ctrl+shift+r.

HTTP2, a more efficient hyper text transfer protocol.

Another optimization is the use of HTTP2 over HTTP/1.1. This is a relatively recent protocol that increases the transport speed of the data. This increase is the result of HTTP2 compressing the packet data headers and multiplexing multiple requests into a single TCP connection. In other words, it produces less overhead data and needs to open fewer connections between the server and the browser.

The effect of this is most notable when the browser needs to do a lot of different requests, since these can all be fit into a single connection. In our case that specifically means that articles with more images will load slightly faster over HTTP2 than over HTTP/1.1.

| | FP | WE | HS | FW | CW |
|----------|-------|-------|-------|-------|-------|
| HTTP/1.1 | 1.46s | 1.87s | 1.54s | 1.86s | 1.89s |
| HTTP2 | 1.30s | 1.49s | 1.54s | 1.79s | 1.55s |
| Images | 9 | 21 | 11 | 19 | 23 |
| savings | 11% | 21% | 0% | 4% | 18% |

Not all browsers support HTTP2 but the NGINX implementation will automatically serve the files over HTTP/1.1 for those browsers.

It is enabled at the start of the server directive:

:::console

server{

listen 443 ssl http2;

}

Serve the page over HTTPS

Even though the website has no dynamic functionality like login forms, we have also implemented SSL to provide Transport Layer Security. We do this mostly to improve page rankings in search engines.

There is something to be said in favour of supporting both HTTP and HTTPS versions of the website but in our case that would mean more redirects or maintaining two versions of the website.

For this reason we redirect all our traffic to HTTPS via the following server directive:

:::console

server {

listen 80;

server_name solar.lowtechmagazine.com;

location / {

return 301 https://$server_name$request_uri;

}

}

Then we've set up SSL with the following tweaks:

:::console

# Improve HTTPS performance with session resumption

ssl_session_cache shared:SSL:10m;

ssl_session_timeout 180m;

SSL sessions only expire after three hours meaning that while someone browses the website, they don't need to renegotiate a new SSL session during this period:

:::console

# Enable server-side protection against BEAST attacks

ssl_prefer_server_ciphers on;

ssl_ciphers ECDH+AESGCM:ECDH+AES256:ECDH+AES128:DH+3DES:!ADH:!AECDH:!MD5;

We use a limited set of modern cryptographic ciphers and protocols:

:::console

# Disable SSLv3

ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

We tell the visitor's browser to always use HTTPS, in order to reduce the number of 301 redirects, which might slow down loading times:

:::console

# Enable HSTS (https://developer.mozilla.org/en-US/docs/Security/HTTP_Strict_Transport_Security)

add_header Strict-Transport-Security "max-age=63072000; includeSubdomains";
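The `max-age` value in this header is expressed in seconds. As a quick sanity check, the sketch below parses the header value and converts it to days; the helper name `hsts_max_age` is hypothetical and the parsing is deliberately simplistic.

```python
from datetime import timedelta


def hsts_max_age(header_value: str) -> timedelta:
    """Extract max-age from a Strict-Transport-Security header value."""
    for directive in header_value.split(";"):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age":
            return timedelta(seconds=int(value.strip('"')))
    raise ValueError("no max-age directive found")


lifetime = hsts_max_age("max-age=63072000; includeSubdomains")
print(lifetime.days, "days")
```

With the value used here, browsers remember to use HTTPS for 730 days, i.e. two years, after their last visit.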

We enable OCSP stapling, which is a quick way for browsers to check whether the certificate is still active without incurring more round trips to the certificate issuer. Most tutorials recommend setting Google's 8.8.8.8 and 8.8.4.4 DNS servers but we don't want to use those. Instead we chose some servers provided through https://www.opennic.org that are close to our location:

:::console

# Enable OCSP stapling (http://blog.mozilla.org/security/2013/07/29/ocsp-stapling-in-firefox)

ssl_stapling on;

ssl_stapling_verify on;

ssl_trusted_certificate /etc/letsencrypt/live/solar.lowtechmagazine.com/fullchain.pem;

resolver 87.98.175.85 193.183.98.66 valid=300s;

resolver_timeout 5s;

Last but not least, we lower the size of the SSL buffer to improve the so-called 'Time To First Byte'7, which shortens the amount of time passing between a click and elements changing on the screen:

:::console

# Lower the buffer size to improve TTFB

ssl_buffer_size 4k;

The above SSL tweaks are heavily indebted to these two articles by Bjorn Johansen and Hayden James.

Setting up LetsEncrypt for HTTPS

The above are all the SSL performance tweaks but we still need to get our SSL certificates. We'll do so using LetsEncrypt.

First install certbot:

:::console

apt-get install certbot -t stretch-backports

Then run the command to request a certificate using the webroot authenticator:

:::console

sudo certbot certonly --authenticator webroot --pre-hook "nginx -s stop" --post-hook "nginx"

Use the certonly subcommand so that it just creates the certificates but doesn't touch the NGINX config.

This will prompt an interactive screen where you set the (sub)domain(s) you're requesting certificates for. In our case that was solar.lowtechmagazine.com.

Then it will ask for the location of the webroot, which in our case is /var/www/html/. It will then proceed to generate a certificate.

Then the only thing you need to do in your NGINX config is to specify where your certificates are located. This is usually in /etc/letsencrypt/live/domain.name/. In our case it is the following:

:::console

ssl_certificate /etc/letsencrypt/live/solar.lowtechmagazine.com/fullchain.pem;

ssl_certificate_key /etc/letsencrypt/live/solar.lowtechmagazine.com/privkey.pem;

Full NGINX config

Without further ado:

:::console

root@solarserver:/var/log/nginx# cat /etc/nginx/sites-enabled/solar.lowtechmagazine.com

# Expires map

map $sent_http_content_type $expires {

default off;

text/html 7d;

text/css max;

application/javascript max;

~image/ max;

}

server {

listen 80;

server_name solar.lowtechmagazine.com;

location / {

return 301 https://$server_name$request_uri;

}

}

server{

listen 443 ssl http2;

server_name solar.lowtechmagazine.com;

charset UTF-8; #improve page speed by sending the charset with the first response.

location / {

root /var/www/html/;

index index.html;

autoindex off;

}

#Caching (save html pages for 7 days, rest as long as possible, no caching on frontpage)

expires $expires;

location @index {

add_header Last-Modified $date_gmt;

add_header Cache-Control 'no-cache, no-store';

etag off;

expires off;

}

#error_page 404 /404.html;

# redirect server error pages to the static page /50x.html

#error_page 500 502 503 504 /50x.html;

#location = /50x.html {

# root /var/www/;

#}

#Compression

gzip on;

gzip_disable "msie6";

gzip_vary on;

gzip_comp_level 6;

gzip_buffers 16 8k;

gzip_http_version 1.1;

gzip_types text/plain text/css application/json application/javascript text/xml application/xml application/xml+rss text/javascript;

#Caching (save html page for 7 days, rest as long as possible)

expires $expires;

# Logs

access_log /var/log/nginx/solar.lowtechmagazine.com_ssl.access.log;

error_log /var/log/nginx/solar.lowtechmagazine.com_ssl.error.log;

# SSL Settings:

ssl_certificate /etc/letsencrypt/live/solar.lowtechmagazine.com/fullchain.pem;

ssl_certificate_key /etc/letsencrypt/live/solar.lowtechmagazine.com/privkey.pem;

# Improve HTTPS performance with session resumption

ssl_session_cache shared:SSL:10m;

ssl_session_timeout 5m;

# Enable server-side protection against BEAST attacks

ssl_prefer_server_ciphers on;

ssl_ciphers ECDH+AESGCM:ECDH+AES256:ECDH+AES128:DH+3DES:!ADH:!AECDH:!MD5;

# Disable SSLv3

ssl_protocols TLSv1 TLSv1.1 TLSv1.2;

# Lower the buffer size to improve TTFB

ssl_buffer_size 4k;

# Diffie-Hellman parameter for DHE ciphersuites

# $ sudo openssl dhparam -out /etc/ssl/certs/dhparam.pem 4096

ssl_dhparam /etc/ssl/certs/dhparam.pem;

# Enable HSTS (https://developer.mozilla.org/en-US/docs/Security/HTTP_Strict_Transport_Security)

add_header Strict-Transport-Security "max-age=63072000; includeSubdomains";

# Enable OCSP stapling (http://blog.mozilla.org/security/2013/07/29/ocsp-stapling-in-firefox)

ssl_stapling on;

ssl_stapling_verify on;

ssl_trusted_certificate /etc/letsencrypt/live/solar.lowtechmagazine.com/fullchain.pem;

resolver 87.98.175.85 193.183.98.66 valid=300s;

resolver_timeout 5s;

}

Hardware

Server

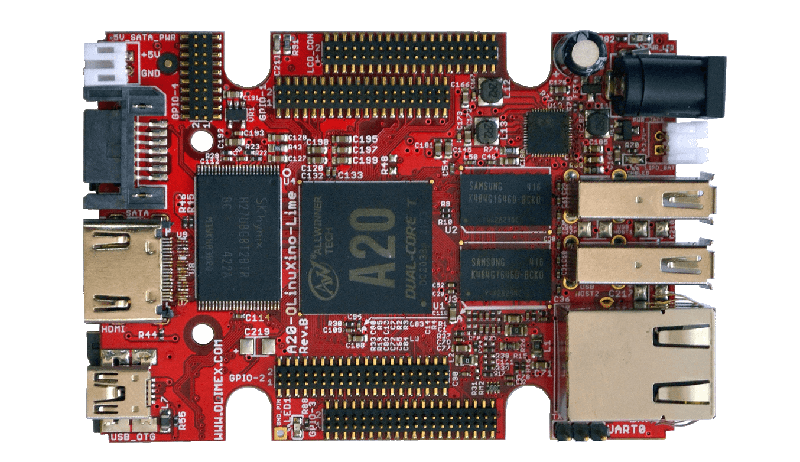

The server itself is an Olimex Olinuxino A20 Lime 2 single board computer.

We chose it because of the quality of the (open source) hardware8, the low power consumption and useful extra components such as the charging circuit based on the AXP209 power management chip.

This chip makes it possible to query power statistics such as current voltage and amperage both from the DC-barrel jack connection and the battery. The maintainers of Armbian exposed these statistics via sysfs bindings in their OS.

In addition to the power statistics the power chip can charge and discharge a Lithium Polymer battery and automatically switch between the battery and DC-barrel connector. This means the battery can then act as an uninterruptible power supply, which helps prevent sudden shutdowns. The LiPo battery used has a capacity of 6600mAh, which is about 24 watt-hours.
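The conversion from mAh to watt-hours depends on the battery voltage. The sketch below assumes a nominal voltage of 3.7 V, which is typical for a single LiPo cell but is our assumption here. The `load_watts` value in the usage line is purely hypothetical and not a measurement of this server.

```python
def watt_hours(capacity_mah: float, nominal_voltage: float = 3.7) -> float:
    """Convert a battery capacity in mAh to watt-hours."""
    return capacity_mah / 1000 * nominal_voltage


def runtime_hours(energy_wh: float, load_watts: float) -> float:
    """Estimate how long a full battery lasts at a constant load."""
    if load_watts <= 0:
        raise ValueError("load must be positive")
    return energy_wh / load_watts


energy = watt_hours(6600)
print(f"{energy:.1f} Wh")
# Hypothetical constant load of 2 W, only to show how the estimate works.
print(f"{runtime_hours(energy, load_watts=2.0):.1f} hours")
```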

The server's operating system runs entirely from an SD card. Not only are these low-powered, they are also much faster than hard drives. We use a high-speed Class 10 16GB micro-SD card. A 16GB card is actually a bit of an overkill considering the operating system is around 1GB and the website a mere 30MB, but considering the price it doesn't make sense to buy any smaller high-performance cards.

Network

The server gets its internet access through the existing connection of the home office in Barcelona. This connection is a 100mbit consumer fiber connection with a static IP address.

The fiber connection itself is not necessary, especially if you keep your data footprint small, but a fixed IP address is very handy.

The router is a standard consumer router that came with the internet contract. To make the website available, some settings in the router's firewall had to be changed.

Using a process called 'port forwarding', the following ports had to be forwarded between the external network and the server's local IP address:

Port 80 to 80 for HTTP

Port 443 to 443 for HTTPS

Port 22 to 22 for SSH
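Once the forwarding rules are in place, it helps to verify from a machine outside the home network that the three ports are actually reachable. A minimal sketch using Python's standard `socket` module is shown below; the function names are hypothetical and the check only tests whether a TCP connection can be opened, not whether the right service answers.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_forwarding(host: str, ports=(80, 443, 22)) -> dict:
    """Check each forwarded port and return a {port: reachable} mapping."""
    return {port: port_is_open(host, port) for port in ports}
```

Calling `check_forwarding("solar.lowtechmagazine.com")` from an external machine should report all three ports as reachable if the router is configured as described.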

Room for improvements?

OS Optimization

While the Armbian operating system has all kinds of optimizations for running on a SoC, there probably are still many tweaks that can be made to lower the energy usage.

For example, could energy be saved by disabling some of the hardware, such as the USB hub? Tips or insights into this are greatly appreciated!

Image dithering

We're looking for suggestions on how to further compress the images while maintaining this visual quality. We know PNGs are in theory not optimal for representing images on the web, even though they let us limit the color palette to save bandwidth and produce very crisp dithered images.

We've found that saving them as JPEG after dithering in fact increases the file size, but perhaps other file formats exist that give us similar quality but have a lighter footprint.

Sensible comments on static sites

Dynamic content such as comments is in theory incompatible with a static site.

At the same time they are a big part of the community of knowledge around lowtechmagazine.com.

The comment box under each article on that site is widely used, but e-mail is equally used (often the comments are then added to the article by the author after moderating).

There are some plugins that might address this such as Bernhard Scheirle's 'Pelican Comment System' but these we haven't tested.

SSL & Legacy browsers

An open question remains: in what way would it be possible to further extend the support for older machines and browsers without compromising on security by using deprecated ciphers? Should we maintain both HTTP and HTTPS versions of the site?

Donations

As is mentioned on the 'donate' page of the site, advertising trackers are incompatible with the new web site design, and we really want to keep Low-Tech Magazine tracker-free and sustainable. So if you enjoy our work or find our public research useful, please consider donating.

Get in touch

Perhaps you have other issues you'd like to address or get in touch about. You can do so via:

- Writing solar@lowtechmagazine.com directly.
- By joining the homebrewserver.club XMPP chatroom
- By joining the homebrewserver.club mailinglist

- The advantages of the static website became apparent when, on the 26th and 27th of September 2018, the site was on the front page of the popular link aggregator website HackerNews. In a few hours we received more than 500,000 hits but the website and server handled it all fine, never exceeding 30% CPU utilization. ↩︎
- https://en.wikipedia.org/wiki/Dither#Digital_photography_and_image_processing ↩︎
- http://www.efg2.com/Lab/Library/ImageProcessing/DHALF.TXT ↩︎
- See for example https://web.archive.org/web/20180325055007/https://bisqwit.iki.fi/story/howto/dither/jy/ ↩︎
- Quote from 'What a website can be' ↩︎
- For a full description of the board have a look at the manual ↩︎